School of Computer Science and Engineering, South China University of Technology, Guangzhou 510006, China

Abstract

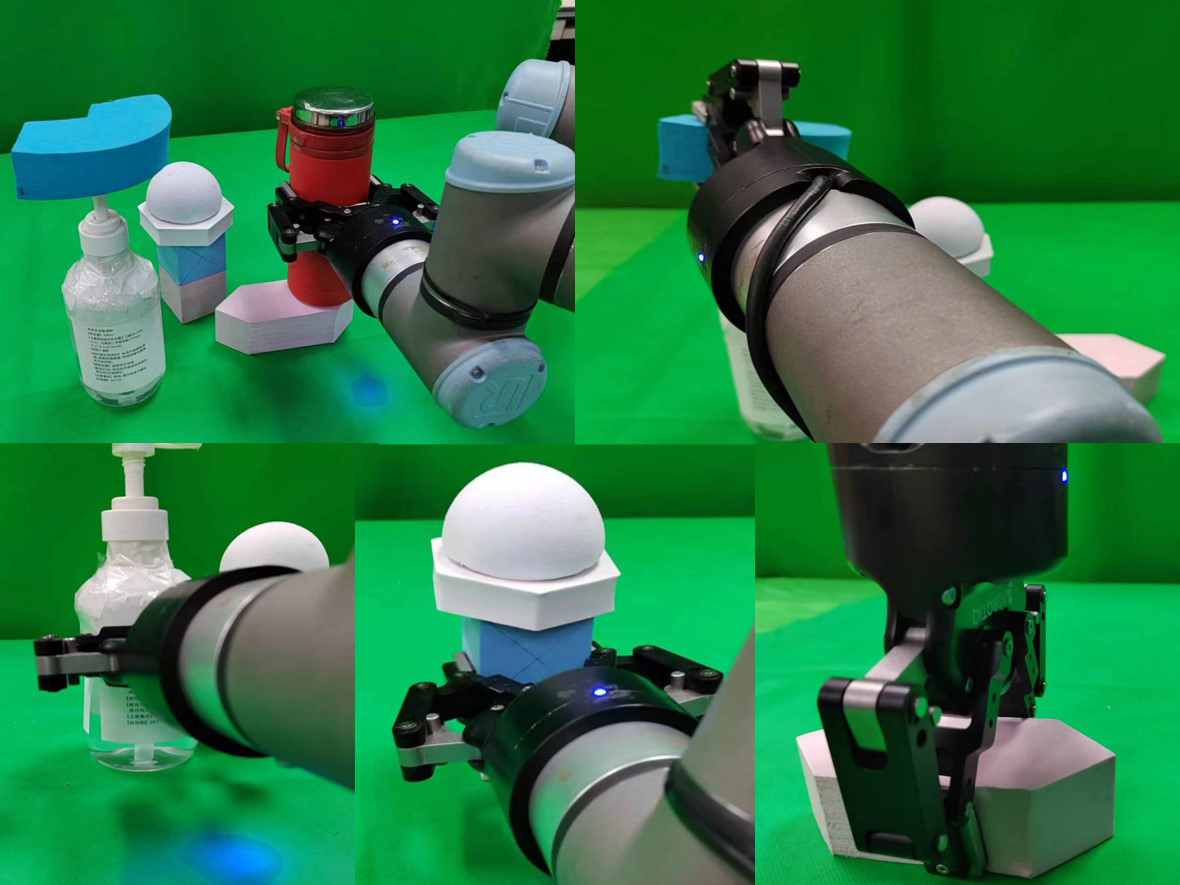

Irregular objects have complex and diverse shapes and structures, so they may shake and fall off while being grasped and transported. To address this, a robotic grasping method based on shape analysis and probabilistic reasoning is proposed. First, the dispersivity and flatness of the object's point cloud are analyzed to generate a set of candidate grasp poses. Next, the factors that cause the object to shake and fall off are analyzed qualitatively in simulation, and the numbers of successful grasping and rotation-translation trials are counted. The stability of each grasp pose is then quantified using the conditional expectation method, and a PointNet discriminator is trained to evaluate and rank the candidate grasp poses. The grasp is finally executed with the optimal pose. Experimental results indicate that the proposed method can address the shaking and falling off of irregular objects during grasping and transport. Compared with the benchmark method, the average grasping success rate improves to 89.2%, an increase of 2.6%, and the average transportation stability improves to 84.2%, an increase of 22.7%. The proposed method enables intelligent grasping in multi-object stacking scenarios, maintains stability during grasping and transport, and establishes a logical grasping sequence.
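The counting and conditional-expectation step can be illustrated with a minimal Python sketch. It is not the authors' implementation: the abstract does not give the exact formula, so the sketch assumes that each simulated trial records whether the grasp succeeded and how many rotation-translation motions the object survived, and it scores a pose as the expected fraction of motions held given a successful grasp. The names `Trial`, `stability_score`, and `rank_poses` are hypothetical. In the proposed method such scores would serve to train the PointNet discriminator; here the poses are simply ranked by the score directly.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Trial:
    """One simulated attempt for a candidate grasp pose (hypothetical record layout)."""
    grasp_ok: bool      # did the gripper lift the object?
    motions_held: int   # rotation-translation motions survived without dropping
    motions_total: int  # rotation-translation motions attempted


def stability_score(trials: List[Trial]) -> Optional[float]:
    """Estimate E[fraction of motions held | grasp succeeded] from trial counts.

    Assumed definition for illustration only; the source does not state it.
    """
    held = [t.motions_held / t.motions_total
            for t in trials if t.grasp_ok and t.motions_total > 0]
    if not held:
        return None  # no successful grasp, so the conditional expectation is undefined
    return sum(held) / len(held)


def rank_poses(trials_by_pose: Dict[str, List[Trial]]) -> List[str]:
    """Order pose ids from most to least stable; poses without a score go last."""
    scores = {pid: stability_score(ts) for pid, ts in trials_by_pose.items()}
    return sorted(scores, key=lambda pid: (scores[pid] is None, -(scores[pid] or 0.0)))
```

Conditioning on a successful grasp keeps the two failure modes apart: a pose that is hard to acquire but very stable once held is not penalized in the stability score, and its acquisition rate can be tracked separately.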
